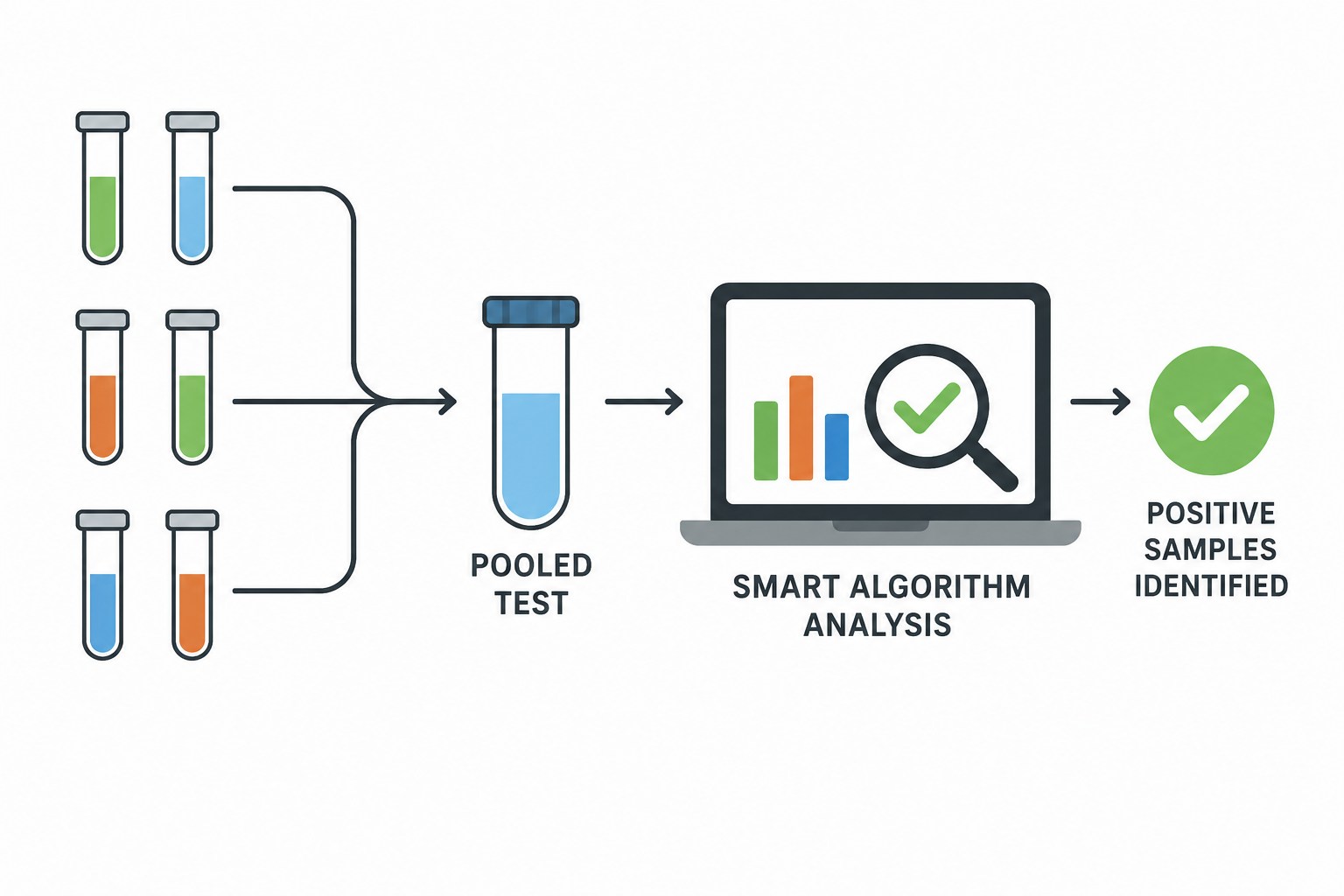

As excitement builds throughout health and information systems worldwide over the rich potential benefits of new tools generated by artificial intelligence (AI), the UN health agency on Tuesday called for action to ensure that patients are properly protected.

Cautionary measures normally applied to any new technology are not being exercised consistently with regard to large language model (LLM) tools, which use AI for crunching data, creating content, and answering questions, the World Health Organization (WHO) warned.

“Precipitous adoption of untested systems could lead to errors by healthcare workers, cause harm to patients, erode trust in AI, and thereby undermine or delay the potential long-term benefits and uses of such technologies around the world,” the agency said.

As such, the agency proposed that these concerns be addressed and that clear evidence of benefit be measured before such tools come into widespread use in routine health care and medicine.

To protect people’s health and reduce inequity, the risks must be examined carefully when these new tools are used to improve access to health information, as decision-support tools, or even to enhance diagnostic capacity in under-resourced settings, WHO said.

Committed to harnessing new technologies to improve human health, WHO recommends that policymakers ensure patient safety and protection as technology firms work to commercialize LLM tools.

The agency reiterated the importance of applying ethical principles and appropriate governance. In this vein, the UN health agency published Ethics and Governance of Artificial Intelligence for Health in 2021, ahead of the adoption of the first global agreement on the ethics of AI.

- UN NEWS
